Plugging the Holes in the Weighted Scoring Model

For many years, we have used the weighted scoring model to help clients make decisions about project portfolio balancing, technology selection and other business decisions. Over those years, we have noticed a “hole” in the process: the results do not always tell the true story. Specifically, the winning solution might fail to meet one of the model’s critical criteria. Whenever that happened, we made the client aware of the shortcoming and discussed ways to overcome it.

This is the second article in a series concerning the Weighted Scoring Model. The first article, What is the Weighted Scoring Method?, describes the basic model; if you are not familiar with this method of supporting informed decisions, read it first. The weighted scoring model also goes by other names, such as Weighted Scoring Method and Multi-Criteria Decision Analysis.

Recently, while applying the model to another client’s project portfolio, we decided it was time to “fix” the problem noted above. After much online research and reading, we found no one else who mentioned this shortcoming, let alone offered a solution. That is the motivation for this article: to propose a solution to the Weighted Scoring Model Hole.

PMBOK® Guide Definition

Multi-Criteria Decision Analysis: This technique utilizes a decision matrix to provide a systematic analytical approach for establishing criteria, such as risk levels, uncertainty, and valuation, to evaluate and rank many ideas.

What is the Weighted Scoring Model?

In this article, we use weighted scoring model, weighted scoring method and multi-criteria decision analysis interchangeably to help you remember they are synonymous. Simply put, the weighted scoring method uses a set of criteria which are weighted by importance

- high (Essential),

- medium-high,

- medium (Important),

- medium-low,

- low (Nice-to-Have), and

- Not Applicable

to compare various solutions equally. Each option is scored against the criteria or requirement using a scale of

- Exceeds criteria,

- Meets criteria,

- Supports criteria, or

- No Support.

Some people use numerical values instead, such as 0 to 5 with 5 being the highest. We prefer ordinal scales since participants can more easily identify with terms such as high, medium, low, or Exceeds, Meets, Supports, etc. Using a tool such as Microsoft Excel, these labels are easily translated into numeric values for calculation. To determine the weighted score, we multiply each criterion’s importance by the option’s score for that criterion, then sum the individual weighted scores to get the option’s total weighted score. The option with the highest value most closely aligns with the criteria list as it is ranked.
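The calculation just described can be sketched in a few lines of Python. This is only an illustration, not our Excel workbook: the numeric values assigned to each label, the function name weighted_score and the sample criteria are all assumptions made for the example.

```python
# Hypothetical label-to-number mappings. The article only says the ordinal
# labels are translated into numbers; these particular values are assumed.
IMPORTANCE = {
    "High": 5,
    "Medium-high": 4,
    "Medium": 3,
    "Medium-low": 2,
    "Low": 1,
    "Not Applicable": 0,
}
RATING = {
    "Exceeds": 3,
    "Meets": 2,
    "Supports": 1,
    "No Support": 0,
}


def weighted_score(criteria, ratings):
    """Sum of (importance x rating) over every criterion for one option.

    criteria: dict mapping criterion name -> importance label
    ratings:  dict mapping criterion name -> rating label for the option
    """
    return sum(
        IMPORTANCE[importance] * RATING[ratings[name]]
        for name, importance in criteria.items()
    )


# Illustrative criteria and ratings (not taken from a real client sheet).
criteria = {
    "Secure login": "High",
    "Gantt charts": "Medium",
    "Custom themes": "Low",
}
option_a = {
    "Secure login": "Meets",
    "Gantt charts": "Exceeds",
    "Custom themes": "No Support",
}

# 5*2 + 3*3 + 1*0 = 19
print(weighted_score(criteria, option_a))
```

Changing the label sets (for example to Fully Supports, Partially Supports, Does Not Support) only requires changing the two dictionaries.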

For this particular client, we chose to hide the requirements’ priorities so as not to bias the ratings. This particular model rates project management software against the requirements. Additionally, the client wanted the sheet to compare one candidate at a time, rather than listing all possible candidates in the same sheet. Again, they wanted unbiased results. They decided to stick with

- High,

- Medium-high,

- Medium,

- Medium-low, and

- Low

for the criteria ranking. For the rating values, they chose

- Fully Supports,

- Partially Supports, and

- Does Not Support

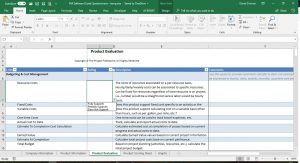

As can be seen, the ordinal scale is easily customized to fit the client’s desires. Here is a screen shot of the blank evaluation sheet.

- The requirements are categorized and listed in the first column.

- The second column lists the Ratings.

- For sanity’s sake, we described the requirements more fully under the Description column and

- Left room for comments in the Comments column.

Additionally, for ease of use, we used a limited drop-down list for rating values so they would be consistent throughout the rating process. We didn’t want people to “invent” values as they rated the software, which would invalidate the results. The second image illustrates completed ratings.

The standard set of criteria used in the weighted scoring model provides equal evaluation for each option rather than relying on people’s opinions and gut feels. It provides objectivity to comparing the options even though the scoring of an option can be somewhat subjective. The subjectivity of the scoring is why we tell clients the weighted scoring method does not make the decision, but provides supporting evidence for making an informed decision.

So Where’s the Hole in the Weighted Scoring Model?

In its strength lies its weakness. As mentioned, the criteria are weighted by importance:

- Essential (high),

- Important (Medium),

- Nice-to-Have (Low), or

- Not Applicable.

Not Applicable is used for criteria that may be used later but do not apply at this time. Few should exist at any particular time.

As mentioned earlier, the example images show the client’s decision to go with a simpler model of Fully Supports, Partially Supports, or Does Not Support for their rating values.

- Fully Supports – software supports the criteria as needed

- Partially Supports – software provides the information, but an additional tool may be needed. For example, the software exports data in MS Excel format, but MS Excel is needed to generate graphs, charts, etc.

- Does Not Support – software has no support for that feature.

The “hole” is created when the criteria list grows long, approximately fifty items or more. With longer lists, the chance that the highest-scoring option misses an Essential criterion becomes large. As a result, the “winner” by score may be missing crucial requirements. Plugging the hole as described here highlights any Essential or Important criteria an option misses.

We first learned about the “hole” in the traditional Weighted Scoring Model with an early client. An international shipping company retained our services to help them select their next electronic messaging platform, a technology selection using multi-criteria decision analysis. After collecting the requirements for the future system, we placed them in a spreadsheet, in this case in column A, and chose three software solutions to compare against the requirements list (the criteria). We chose this arrangement since there were many more requirements than options. Whether you instead arrange the options down the rows and the criteria along the columns is up to you; either way the method still works. Listing long lists of criteria in the first column seems to work best.

As we remember, there were at least 100 requirements, maybe more. With the stakeholders, we ranked each requirement using the Essential, Important, and Nice-to-Have scale. Security was ranked high. They wanted secure login, secure messages and secure transmission to the mailbox. Each email platform was scored against the requirements and its weighted score computed. The option with the highest score scored zero (0) on all three security requirements. Because it scored well against other requirements ranked high (essential) and medium (important), its aggregate score put it on top. Clearly, the solution did not meet all the essential requirements, especially the critical security ones.
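To see how this can happen arithmetically, here is a small Python sketch with made-up numbers. It is not the shipping company’s data: the weights (Essential = 3, Important = 2, Nice-to-Have = 1), the 0 to 3 rating scale, the criterion names and both options are hypothetical, chosen only to reproduce the effect.

```python
# Hypothetical weights and a 0-3 rating scale (0 = No Support, 3 = Exceeds).
WEIGHT = {"Essential": 3, "Important": 2, "Nice-to-Have": 1}

# (criterion name, importance) pairs; the names are illustrative only.
criteria = (
    [(f"Security {i}", "Essential") for i in range(1, 4)]
    + [(f"Core feature {i}", "Essential") for i in range(1, 5)]
    + [(f"Reporting {i}", "Important") for i in range(1, 5)]
    + [(f"Extra {i}", "Nice-to-Have") for i in range(1, 4)]
)


def total(scores):
    """Traditional weighted score: sum of weight x rating."""
    return sum(WEIGHT[importance] * scores[name] for name, importance in criteria)


# Option X ignores security entirely but exceeds everything else.
option_x = {
    name: (0 if name.startswith("Security") else 3) for name, _ in criteria
}
# Option Y simply meets every criterion, security included.
option_y = {name: 2 for name, _ in criteria}

# Essential criteria that Option X does not support at all.
missed_by_x = [
    name
    for name, importance in criteria
    if importance == "Essential" and option_x[name] == 0
]

print(total(option_x))  # 69
print(total(option_y))  # 64
print(missed_by_x)      # ['Security 1', 'Security 2', 'Security 3']
```

Option X wins on aggregate score even though it supports none of the three Essential security criteria, which is exactly the hole described above.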

Overcoming the Weighted Scoring Method Hole

After considering the problem, we realized each option being scored in the Multi-criteria Decision Analysis should actually receive three scores:

- The weighted score as traditionally computed,

- The highly weighted criteria alignment, and

- The top priority criteria alignment.

We already summarized the weighted score computation in this article and described it more fully in the article What is the Weighted Scoring Method? mentioned earlier.

Let’s explore the other elements.

Highly Weighted Criteria Alignment

As mentioned in the introduction, an option can produce a high score simply because of the number of criteria being considered and the aggregate score across them, giving a false sense of close alignment to the highly weighted (essential) criteria. Unfortunately, essential criteria may go unmet, leading to improper decisions.

To eliminate this error, we need to consider the alignment against just the highly weighted criteria, i.e., count the number of highly weighted criteria this option met or exceeded. If the total is less than the number of highly weighted criteria, then this option missed the mark.

Since our weighted scoring model uses Microsoft Excel, we simply determine whether the option scored high (that is, meets or exceeds the criterion) and whether the criterion itself was rated as high (or medium-high, depending on your company culture). If both are true, assign a value of 1. Add up the matches; if they equal the number of highly weighted criteria, we have a winner. If the total is less, we need to discover where this option missed the mark.

This value could be expressed in the form of a percentage. Simply divide the option’s Highly Weighted Criteria Alignment value by the total number of highly weighted criteria. If 100%, we have a complete match. If less than 100%, we need to do some research.
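The count and the percentage can be sketched in Python as follows. This is an illustration of the logic rather than our spreadsheet formulas; the function name, its parameters and the sample data are assumptions, and which labels count as “highly weighted” or “met” is left configurable.

```python
def highly_weighted_alignment(
    criteria,
    ratings,
    high_labels=("High",),            # add "Medium-high" if your culture calls for it
    met_labels=("Meets", "Exceeds"),  # rating labels that count as a match
):
    """Return (met_count, high_count, percent, missed_names) for one option.

    criteria: dict mapping criterion name -> importance label
    ratings:  dict mapping criterion name -> rating label for the option
    """
    high = [name for name, imp in criteria.items() if imp in high_labels]
    missed = [name for name in high if ratings[name] not in met_labels]
    met_count = len(high) - len(missed)
    # Assumption: with no highly weighted criteria, report 100% alignment.
    percent = 100.0 * met_count / len(high) if high else 100.0
    return met_count, len(high), percent, missed


# Illustrative data only.
criteria = {
    "Secure login": "High",
    "Secure messages": "High",
    "Secure transmission": "High",
    "Calendar sharing": "High",
    "Custom themes": "Low",
}
ratings = {
    "Secure login": "No Support",
    "Secure messages": "Meets",
    "Secure transmission": "Exceeds",
    "Calendar sharing": "Meets",
    "Custom themes": "Exceeds",
}

met, total_high, percent, missed = highly_weighted_alignment(criteria, ratings)
print(met, total_high, percent)  # 3 4 75.0
print(missed)                    # ['Secure login']
```

Returning the list of missed criteria alongside the percentage points directly at where the research needs to start.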

Top Priority Criteria Alignment

Some clients like to add an additional layer of weight on criteria they consider to be top priority. These are the handful of requirements an option must have in order to be chosen, regardless of its weighted score, highly weighted criteria alignment or weighted score bucket. If the option doesn’t meet these top priority items 100%, it simply won’t do for their needs.

For example, a client might state 30 of the 50 requirements as Essential. Yet, of those 30, 10 requirements are Top Priority, more essential than just essential. The Priority ranking provides another layer of determining the option’s fit against the client’s requirements.

Similar to the Highly Weighted Criteria Alignment calculation, the worksheet counts the top priority items and compares that count to the number of those items the option meets or exceeds. If the two counts are equal, the option can be considered for selection.

In the hidden sheet, our example client prioritized the requirements and assigned “Top Priority” to a few of them. The Top Priority requirements should truly be top priority: “must haves” such that, if they are not met, the item being considered has a high probability of being rejected.
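The Top Priority check reduces to comparing two counts. Here is a minimal Python sketch using the example client’s rating labels; the function name, the requirement names and the assumption that only “Fully Supports” counts as meeting a top priority item are ours for illustration.

```python
def top_priority_alignment(top_priority, ratings, met_labels=("Fully Supports",)):
    """Return (met_count, top_priority_count, passes, missed_names).

    top_priority: list of requirement names flagged as Top Priority
    ratings:      dict mapping requirement name -> rating label for the option
    An unrated top priority requirement is treated as not met.
    """
    missed = [name for name in top_priority if ratings.get(name) not in met_labels]
    met_count = len(top_priority) - len(missed)
    return met_count, len(top_priority), not missed, missed


# Illustrative requirement names and ratings only.
top_priority = ["Single sign-on", "Portfolio dashboard", "Time tracking"]
ratings = {
    "Single sign-on": "Fully Supports",
    "Portfolio dashboard": "Partially Supports",
    "Time tracking": "Fully Supports",
    "Custom themes": "Does Not Support",
}

met, total_top, passes, missed = top_priority_alignment(top_priority, ratings)
print(met, total_top, passes)  # 2 3 False
print(missed)                  # ['Portfolio dashboard']
```

Here the candidate fails the check because one top priority requirement is only partially supported, whatever its overall weighted score may be.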

Weighted Score Bucket

The weighted score bucket is simply a visual indication of the weighted score, expressed as Low, Medium or High alignment with the criteria/requirements weighting. In our models, we treat 75% of the total possible score as the threshold for the high alignment bucket, place anything at 35% or less in the low alignment bucket, and put everything in between in the medium alignment bucket. Using conditional formatting in Microsoft Excel, the cell is colored green for high, yellow for medium and red for low.
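The bucketing rule can be sketched in Python as below. The function name and the color mapping are illustrative, and the sketch assumes that a score of exactly 75% of the maximum counts as High; adjust the comparison if your model draws the line differently.

```python
def score_bucket(score, max_score, high=0.75, low=0.35):
    """Classify a weighted score as 'High', 'Medium' or 'Low' alignment.

    score:     the option's weighted score
    max_score: the total possible score (every criterion at the top rating)
    Assumption: ratio >= high is High; ratio <= low is Low; otherwise Medium.
    """
    if max_score <= 0:
        raise ValueError("max_score must be positive")
    ratio = score / max_score
    if ratio >= high:
        return "High"
    if ratio <= low:
        return "Low"
    return "Medium"


# Colors mirroring the conditional formatting described above.
BUCKET_COLOR = {"High": "green", "Medium": "yellow", "Low": "red"}

for score in (80, 50, 30):
    bucket = score_bucket(score, max_score=100)
    print(score, bucket, BUCKET_COLOR[bucket])
# 80 High green
# 50 Medium yellow
# 30 Low red
```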

The Results

By completing the rating for all requirements, we can tally the scores and calculate the Weighted Score, Highly Weighted Criteria Alignment, Top Priority Criteria Alignment and the Weighted Score Bucket. The numerical results can be displayed in simple table format. In this image, the scores are summarized in two ways: total scores and by requirement category.

Since we have numbers and are driving this analysis through Microsoft Excel, we can populate graphs with the results.

It seems we are ready to present our findings to the executives for the final decision. We have data backing up our recommendations. We know where the “failings” are for each selection prior to implementation so we can overcome them BEFORE and not AFTER the fact. And we eliminated the “gut-feel” or “uninformed intuition” method of making decisions.

Conclusion

The weighted scoring method (weighted score model, multi-criteria decision analysis) is a decision support tool. It provides valuable information about a set of solutions or options compared against a standard list of requirements (criteria). The method helps to add a level of objectivity to an otherwise subjective situation. Rather than relying solely on gut feel, hunches and intuition, decision makers can use the data provided by the weighted score model to lend credence to those instincts.

Unfortunately, many people will take the score as fact and base decisions solely on the model’s values without looking further to see if any holes exist in the logic. The result can be disastrous if these holes are not patched.

Using the described methods to check the highly weighted and top-priority criteria match provides a more robust scoring system and decision basis.

We at American Eagle Group (a.k.a. The Project Professors) develop these scoring sheets for clients. The sheets can be customized to meet the client’s needs, as shown here, by changing the ranking and rating “labels”. Additionally, more analysis of the results can be added to the sheets as the client directs. Contact us for more details on how we can serve you for better decision making.

PMBOK® Guide is a registered trademark of the Project Management Institute. All materials are copyright © American Eagle Group. All rights reserved worldwide. Linking to posts is permitted. Copying posts is not.